The organic blend of our tangled relationships with different pieces of technology is the hallmark of a human being of recent vintage.

This year’s I/O was no exception to the way groundbreaking technologies are revealed these days. It’s no longer a one-man show, because even the most exciting content can’t guarantee a response when our generation’s attention span keeps shrinking.

I/O is a major developer conference run by Google that focuses on Android, Google’s cloud-based software, applications, design, development, and much, much more. The diversity of topics and speakers leaves no room to zone out. In my opinion, one of the reasons is an easily distinguishable point that permeated every single day and presentation at I/O this year.

That point was Artificial Intelligence.

The amount of work done since last year, when Google AI was first announced, is insane. Fast-forward through all the talk about the importance of innovation, technology-driven progress, international opportunities, better lives for people around the world, and all that, and you’ll get to the core: in the next couple of years, Google plans to completely overhaul the way the digital industry affects our daily lives.

Even though most of the talk about Google’s international aspirations is gamesmanship, I believe the intentions are fair. It just feels like the world is not ready for self-driving cars yet.

Technologies are exclusive. We are nowhere near a point where they become inclusive.

However, aspiration is where we thrive. Our minds never stop. Despite the massive stratification of the world, advances in everyday consumer electronics are what we all have in common. In this respect, Google is a titan. Luckily, it’s a good titan and it doesn’t use its powers to destroy the world. Let’s hope AI doesn’t fall victim to comic-book-style villainy, though.

So what’s the big deal?

As the keynote progressed, I was intrigued by the seriousness of a subject raised early on: the application of AI in healthcare. We’ve been collecting digital data ever since computers first hit mass production. Today the amount of data machines can store and process is staggering. The only problem we have is data validation. We are not yet ready to delegate life-critical decisions to computers. Well, apparently now there’s a light at the end of the tunnel.

Google will now push the AI and its deep learning capabilities to detect patients’ upcoming medical issues/events far better than any human doctor.

Okay, I can’t really imagine how this will go down. Does it mean doctors will no longer need to study for roughly 10 years to be competent? I’m still of two minds about it, and I believe we’ll have to see the symbiosis in action before things actually change. The first major application of AI in healthcare will be in digital pathology, specifically predicting medical events.

So if Google AI is already powerful enough to analyze medical data, weigh the odds, and predict a diagnosis, what can it do with a more conventional mass of data, like our daily interactions with Gmail?

This is where it got even more interesting. I have autocorrect and predictive text both off on my iPhone. It’s just annoying. In no circumstances do I ever misspell the word ‘duck’ and need it to be corrected. However, Smart Compose, revealed at I/O, is supposed to be smarter than predictive text, which is not capable of understanding context.

Image credit: Googblogs

Even though Smart Compose is still far from being smart enough for everyday use, it can save you a lot of pain when you reply to repetitive emails and can’t just copy and paste.

Let’s wait and see whether Google AI can learn more about your style of communication, your favorite types of jokes and puns, and the events in your life as collected from other apps. Even if it’s not useful, it will definitely be funny.

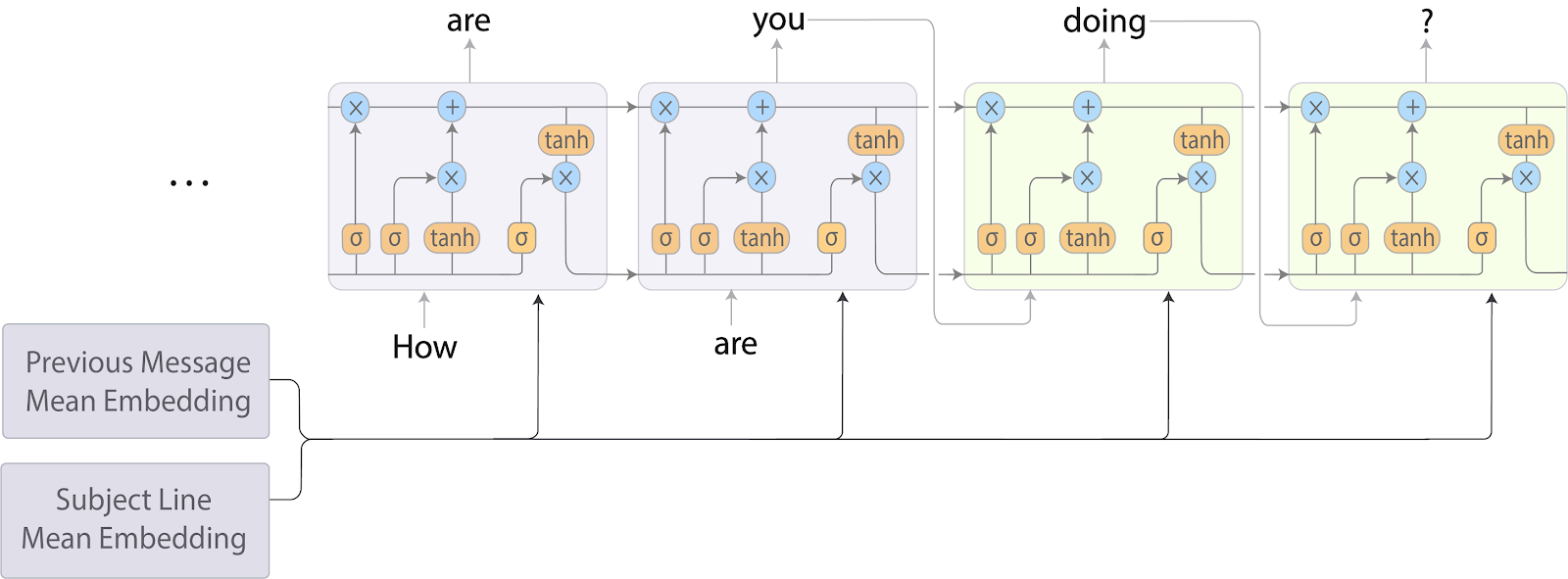

Image credit: Googblogs

Smart Compose RNN-LM model architecture. Subject and previous email message are encoded by averaging the word embeddings in each field. The averaged embeddings are then fed to the RNN-LM at each decoding step.
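To make the caption a little more concrete, here is a toy sketch of the idea it describes: the subject and the previous email are each squashed into one averaged embedding, and that context is fed to the recurrent step alongside the current word at every decoding step. This is not Google’s implementation. The vocabulary, the dimensions, the random untrained weights, the plain tanh RNN cell, and the greedy word choice are all assumptions made up for illustration, and names like `average_embedding`, `rnn_step`, and `suggest` are hypothetical.

```python
import math
import random

random.seed(0)  # fixed seed so the toy weights are reproducible

# Hypothetical tiny vocabulary and sizes, chosen only for illustration.
VOCAB = ["<s>", "thanks", "for", "the", "update", "meeting", "tomorrow", "see", "you"]
EMBED_DIM = 4
HIDDEN_DIM = 6


def rand_matrix(rows, cols):
    """Random (untrained) weight matrix as a list of rows."""
    return [[random.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


# One random embedding vector per word. A real model would learn these.
EMBEDDINGS = {w: [random.uniform(-1, 1) for _ in range(EMBED_DIM)] for w in VOCAB}

# The step input is [word embedding ; subject average ; previous-email average].
W_IN = rand_matrix(HIDDEN_DIM, 3 * EMBED_DIM)
W_H = rand_matrix(HIDDEN_DIM, HIDDEN_DIM)
W_OUT = rand_matrix(len(VOCAB), HIDDEN_DIM)


def average_embedding(words):
    """Encode a field as the mean of its known word vectors (zeros if empty)."""
    vecs = [EMBEDDINGS[w] for w in words if w in EMBEDDINGS]
    if not vecs:
        return [0.0] * EMBED_DIM
    return [sum(col) / len(vecs) for col in zip(*vecs)]


def matvec(matrix, vector):
    return [sum(a * b for a, b in zip(row, vector)) for row in matrix]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def rnn_step(word, hidden, context):
    """One decoding step: the same averaged context is appended every time."""
    x = EMBEDDINGS[word] + context  # list concatenation, not addition
    pre = [a + b for a, b in zip(matvec(W_IN, x), matvec(W_H, hidden))]
    new_hidden = [math.tanh(p) for p in pre]
    return new_hidden, softmax(matvec(W_OUT, new_hidden))


def suggest(subject, previous_email, typed_so_far, max_words=3):
    """Greedily propose the next few words given the email context."""
    context = average_embedding(subject) + average_embedding(previous_email)
    hidden = [0.0] * HIDDEN_DIM
    probs = None
    for word in ["<s>"] + typed_so_far:  # read what the user already typed
        hidden, probs = rnn_step(word, hidden, context)
    suggestion = []
    for _ in range(max_words):
        best = max(range(len(VOCAB)), key=probs.__getitem__)
        suggestion.append(VOCAB[best])
        hidden, probs = rnn_step(VOCAB[best], hidden, context)
    return suggestion


print(suggest(["meeting", "tomorrow"], ["see", "you"], ["thanks"]))
```

Because the weights are random, the printed words are nonsense. The part worth looking at is the data flow: the context vector is computed once and then rides along with every single step.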

The biggest news at this year’s I/O, though, came with the announced Google Assistant improvements. It has a more natural voice now thanks to WaveNet, a technology that generates speech audio directly as a waveform to mimic the human voice.

It can now stay in a conversation without you having to break it into pieces with a “Hey, Google” before every request. For the first time, Google is also tackling ethics and digital wellbeing through a feature it calls Pretty Please.

Before the internet, kids were taught different styles of language. With grown-ups, you have to be polite, and with peers, friendly. But how do you teach them to communicate with something like a digital assistant? Or your IoT-enabled fridge? Is it now Mr. Fridge? Folks at Google know what friendly means.

Nurturing positive vibes when communicating with AI is an important area, as parents often frown upon technology because it requires no real effort from a child beyond formulating a query in whatever manner they like.

Another huge announcement made by Google CEO Sundar Pichai was the demo of Google Duplex. It is an experimental voice AI system that Google has been investing in for the past two or so years. The demo was the first wow moment of the conference, and for good reason.

The Duplex-run AI assistant engaged a human in a completely natural conversation, apparently without them even knowing they were talking to a robot. Wow.

The conversation was successful. The mission was accomplished. The AI finally revealed itself as a real Ex Machina-type entity. The “Mmm-hmm” was particularly creepy. We like to think there is no way a robot could fool us into thinking it is a real person. Fair enough: if you set out to give it a real test, it will fail epically.

In the future, this might become an issue of concern for us. After your first phone call or back-and-forth text exchange with someone, you might well wonder whether it was a robot.

Image credit: Prospero_Arto

But before that, the AI will have to do a lot of deep learning, the kind of learning Google has just lifted the veil on.

What makes us think we can sniff out a robot talking?

The same perception mechanisms that help us tell handwriting from a calligraphic typeface: the tiny imperfections, inconsistencies, alogisms, the WTFs, and so on. We know our personal selves. That’s why we relate to other humans in the way they speak. AI, in turn, takes everything from someone or something from everyone. It relies on algorithms. They are deep, multifaceted, and logical, but they are algorithms. Where we use intuition, AI uses dependencies. Where we rely on gut feeling, robots use math.

As part of the build-up, I/O showed WaveNet and how deeply it breaks sound down to mimic the pitch, micropauses, defects, and other specific aspects of human speech formation.

Duplex showcased its understanding of interjections and hesitations, which in linguistics serve as natural fillers. In other words, loading animations for human speech.

Image credit: Lahesh Kavinda

What’s even cooler is the way Duplex is able to surf the conversation with all of its confusing turns. The second call demonstrated at the conference had it all: a person with a foreign accent, confusion over numbers like we often have on the phone, and a little bit of miscommunication. Duplex got through it all like a boss.

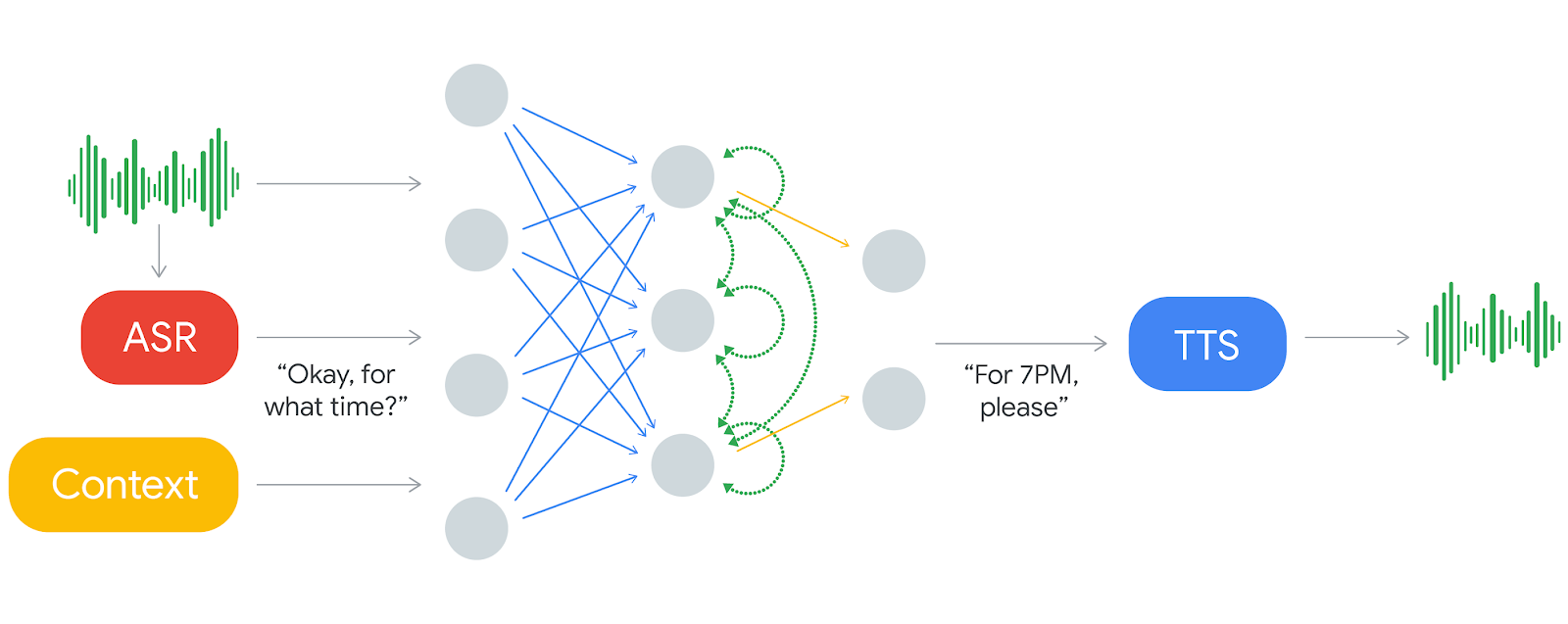

Duplex is based on a recurrent neural network (RNN) that uses the power of TensorFlow, a general-purpose machine learning platform, to deal with the real-world challenges of natural human conversations. The volume of data processed through Google presents nearly boundless opportunities to train the AI not only to comprehend but also to sound natural. For that, Duplex uses a “combination of a concatenative text to speech (TTS) engine and a synthesis TTS engine (using Tacotron and WaveNet) to control intonation depending on the circumstance.”

Image credit: Google AI Blog

Going back to the question of ethics, this can be a game changer in the way we handle phone calls: no social awkwardness, no confusion, just a goal to be accomplished and a wide variety of paths to get there. Another question is what happens when two AI-powered robots have a phone conversation, because that’s what’s going to happen. The benefits are obvious for businesses that are heavy on:

- Real-time appointment bookings.

- Delegated communications with service providers.

- Shopping.

- Filing complaints.

- Reporting issues.

All of that is in the future. As of now, Google AI is helpful in cutting the UI out of our daily routines. I can see how this becomes a reality in the next couple of years. The impressions from this year’s I/O will eventually fade, giving way to the hardware and software advances of Google’s competition. The advances in AI will wither, only to return stronger next year.

All I know is that this is the beginning of an incredible journey that, with no real hardware limitations, will take us into the deep waters of machine intelligence.